After 35 Years of Publishing Standards, Do CAS Standards Make a Difference?

Wendy Neifeld Wheeler

Kelcie Timlin

The College of Saint Rose

Tristan Rios, Hamilton College

Introduction

The Council for the Advancement of Standards (CAS) released the 2015 edition of the blue book in August 2015 (http://www.cas.edu/blog_home). This 9th edition of CAS includes thirteen revised functional areas and guidelines. As higher education professionals know, calls for better quality accountability measures can be heard from stakeholders across the nation—including the federal government, funding agencies, state legislators, accrediting associations, elected officials, parents, and students. Educators within student affairs have been impacted by the increasing demands to provide evidence of outcomes assessment. Upcraft and Schuh (1996) asserted that as “questions of accountability, cost quality access, equity and accreditation combine to make assessment a necessity in higher education, they also make assessment a fundamental necessity in student affairs as well” (p. 7). The inherent goal to continuously make improvements in higher education is an equally compelling factor influencing the necessity of assessment (Keeling, Wall, Underhile, & Dungy, 2008). According to Keeling et al. (2008), “the use of assessment more importantly emerges from the desire of faculty members, student affairs professionals, parents, students and institutional administrators to know, and improve, the quality and effectiveness of higher education” (p. 1).

CAS was launched as a consortium of 11 charter members with the express charge “to advance standards for quality programs and services for students that promote student learning and development and promote the critical role of self-assessment in professional practice” (CAS, 2012, p. v). It has influenced assessment practice in student affairs since its inception and continues to provide significant revisions and updates, as with the release of the newest edition in August 2015. A primary goal of CAS is to fulfill the foundational philosophy that “all professional practice should be designed to achieve specific outcomes for students” (CAS, 2012, p. v). According to Komives and Smedick (2012), “utilizing standards to guide program design along with related learning outcomes widely endorsed by professional associations and consortiums can help provide credibility and validity to campus specific programs” (p. 78).

Today, CAS has grown to include forty-one member organizations with representatives who have developed these resources through a collective approach that integrates numerous perspectives across student affairs.

The purpose of this study was to replicate the original research of Arminio and Gochenauer (2004). Their investigation was designed to “assess the impact of CAS on professionals in CAS member associations…the researchers sought to explore who uses CAS standards, how and why they are used and whether CAS standards are associated with enhanced student learning” (Arminio & Gochenauer, 2004, p. 52). To that end, the processes of sampling and data collection were mirrored as much as possible. This article addresses the question “What changes, if any, are there between the results of the 2004 publication by Arminio and Gochenauer and the current study?”

Methodology

In the spring of 2012, the investigators made contact with original author Jan Arminio requesting permission to duplicate the 2004 quantitative study. Researchers also consulted with Laura Dean, then president of CAS, for approval to move forward with the research. Dean, in consultation with her CAS colleagues, agreed that CAS would support the research project. IRB approval was sought and granted from the home institution. Once all approvals were completed, Dean, on behalf of the investigators, emailed an invitation to participate in the study to the designated CAS liaisons (a representative of the member association whose role is to provide transparency between CAS and the association). Ten of 41 professional associations agreed to participate, resulting in an initial sample size of 2,127. The response rate for each of these associations is provided in Table 1.

| Name of Organization | Response Percentage | Response Count |
| --- | --- | --- |
| ACPA (American College Personnel Association) | 13% | 41 |
| ACHA (American College Health Association) | 1% | 5 |
| ACUHO-I (Association of College and University Housing Officers - International) | 4% | 12 |
| ACUI (Association of College Unions International) | 3% | 8 |
| AHEAD (Association on Higher Education and Disability) | 1% | 3 |
| NACA (National Association for Campus Activities) | 1% | 3 |
| NACADA (National Academic Advising Association) | 37% | 114 |
| NACE (National Association of Colleges and Employers) | 1% | 4 |
| NASAP (National Association of Student Affairs Professionals) | 4% | 13 |
| NODA (National Orientation Directors Association) | 2% | 7 |
| Other | 33% | 99 |

Table 1. Professional Organization Response Rates

The survey consisted of all quantitative multiple-choice questions from the original study (Arminio & Gochenauer, 2004), plus several new multiple-choice questions that expanded the question set to 18 items. Twelve of the 18 questions allowed participants to add comments beyond selecting one of the available answer choices. The instrument had three primary purposes: to determine the extent to which the respondent was familiar with and/or utilized the CAS standards; to learn of other assessment tools or methods being used; and to investigate any existing relationship between assessment practices and student learning outcomes. The instrument was uploaded to the online survey system SurveyMonkey (http://www.surveymonkey.com), a free software platform. The researchers analyzed the data using the analysis tools included in the SurveyMonkey platform. The statistical analysis consisted of frequencies and summaries of the data.
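As a rough illustration of the kind of frequency summary described above, the following Python sketch tallies the answers to a single multiple-choice item and reports the count and share for each option. The response values and the function name `frequency_table` are hypothetical; they are not drawn from the study data or from SurveyMonkey's own analysis tools.

```python
from collections import Counter


def frequency_table(responses):
    """Return (option, count, percent) rows sorted by descending count."""
    counts = Counter(responses)
    total = sum(counts.values())
    return [
        (option, count, round(100 * count / total, 1))
        for option, count in counts.most_common()
    ]


# Hypothetical answers to a yes/no item; not actual study data.
sample = ["yes", "yes", "no", "yes", "no", "yes", "yes", "yes"]

for option, count, percent in frequency_table(sample):
    print(f"{option}: n={count} ({percent}%)")
```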

Participants

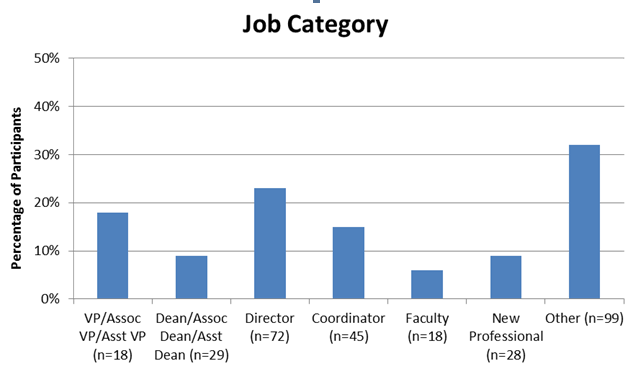

A total of 15% (n=309) of the initial sample was included in the data analysis. Of the 309 respondents, 36% (n=109) indicated they were employed at public 4-year institutions and 24% (n=75) were from private 4-year institutions. Community 2-year schools were represented by 12% (n=36) of the sample. A total of 6% (n=18) of individuals indicated job titles of vice president, associate vice president, or assistant vice president, while deans, associate deans, and assistant deans accounted for 9% (n=29) of respondents. Directors made up the largest named job category at 23% (n=72), followed by coordinators at 15% (n=45). New professionals made up 9% (n=28) of the sample, and faculty represented 6% (n=18). The remaining 32% (n=99) of participants indicated “other” as their job title, as described in Table 2.

Table 2. Percentage of Respondents in Each Job Category

Results

Knowledge and Use of CAS

The current instrument included five yes/no questions, the first of which asked participants whether they had previously heard of CAS. A total of 82% (n=254) of the participants indicated that they had heard of CAS. In contrast, Arminio and Gochenauer (2004) reported that 61% (n=890) of the participants in their study had heard of CAS. For those participants in the current study who indicated they had heard of CAS, follow-up questions were designed to investigate the extent of their CAS usage. Of the 254 respondents who had heard of CAS, 65% (n=158) indicated they were using CAS.

CAS Resources and How They Are Used

Respondents who had indicated they were using CAS (n=158) were asked to identify how they were using the current or past editions of the blue book, the CAS CD, or a particular CAS Self-Assessment Guide (SAG). Of those who use CAS, 81% (n=128) indicated that the blue book was their primary source of CAS-related information, with 72% (n=114) indicating that SAGs for a particular functional area were their secondary source of information.

Participants were then asked to identify how they use each of the materials. The multiple-choice options included: read it, to conduct self-assessment, for evaluation, as a general reference, as a resource guide for my work, for staff development, to increase institutional support, and for accreditation. The foremost reasons respondents were using the CAS resources were to conduct self-assessment at 41% (n=65) and as a resource guide at 35% (n=55). Only 11% (n=17) of the respondents selected “to increase institutional support” as a way they used CAS materials.
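The percentages in this paragraph can be reproduced from the reported counts, assuming the denominator is the 158 respondents who reported using CAS. The short Python sketch below is only an arithmetic check; the dictionary labels and the helper name `percent_of` are illustrative and do not come from the survey instrument.

```python
def percent_of(count, total):
    """Whole-number percentage of count out of total."""
    return round(100 * count / total)


CAS_USERS = 158  # assumed denominator: respondents who reported using CAS

# Counts as reported in the text; labels are shortened for illustration.
reported_counts = {
    "conduct self-assessment": 65,
    "resource guide": 55,
    "increase institutional support": 17,
}

for use, n in reported_counts.items():
    print(f"{use}: {percent_of(n, CAS_USERS)}% (n={n})")
```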

Arminio and Gochenauer (2004) reported that in their study “more respondents used CAS materials to guide their programs than for self-assessment” (p. 57), which is the reverse of this study’s findings. Arminio and Gochenauer (2004) did not report on whether respondents indicated using CAS to increase institutional support, but they did include the general statement “several respondents noted using CAS standards to document support for increased resources” (p. 59).

The instrument contained a multiple-choice question asking respondents if CAS had influenced their programs and services. The question included eight items for the participants to select, along with the option to write in additional comments. The most frequently identified item was assessing current program, which was reported by 70% (n=111) of the participants, with mission statement and goals following at 44% (n=70). This is highlighted by respondents who shared “CAS Standards were critical in helping me to develop program initiatives, mission statements, and assessment plans” and “CAS provides an essential guide of how to best measure, compose and evaluate one’s departmental programs and services.” In addition, 9% (n=14) of the respondents reported that CAS influenced budget requests. Based on respondents’ comments, CAS standards were also credited with influencing “emergency procedures and statements of ethics” in addition to “serving as a guideline for organizational change.”

Of the respondents who had indicated they had heard of CAS, 35% (n=80) were not using CAS. The most common reasons given for using an alternative assessment tool were that the tool was more specific to the program/service (59%; n=47), that the tool was easier to adapt to the program/service (33%; n=26), and that the tool was less complex than CAS (9%; n=7). As with the previous question, the instrument allowed participants to specify other reasons for the use of an alternative assessment tool. Respondents indicated via comments that the alternative tools they were using had been “selected by the division of student affairs” or were “based on the school’s strategic plan.”

Participants who had indicated they had not heard of CAS were asked about their knowledge of other available assessment tools. Of the 55 participants who indicated they had not heard of CAS, 32% (n=18) indicated they had heard of alternative assessment tools, but 68% (n=37) had not. Of those who reported they had heard of other assessment tools, 71% (n=13) indicated they were using an alternative. Those participants who indicated they were using an alternative assessment listed the following instruments as examples: Noel-Levitz, Collegiate Learning Assessment, World-Class Instructional Design and Assessment, and HESI Admission Assessment Exam. Some comments also indicated that the alternative assessment instruments being used had been developed specifically for that department by internal staff.

Influence of CAS on Learning

A total of 85% (n=134) of respondents stated there was a connection between learning outcomes and CAS. Arminio and Gochenauer (2004) reported that 24% of the respondents stated they measured learning outcomes generally and, of those, 41% indicated a connection between the measured learning outcome and CAS standards. Several respondents provided comments on learning outcomes as they relate to CAS. One participant stated, “I think the learning outcomes are brilliant and will guide our programs at my university,” while another respondent shared “CAS provides an essential list of tools and items that must be included in order to meet minimal to substantial learning outcomes.” The current climate, which has a distinct focus on learning outcomes, may have contributed to the increase from the 2004 study to the present.

Of those in the current study who indicated there was a connection between learning outcomes and CAS standards, 64% (n=86) described the connection as strong. The connection was described as vague by 36% (n=48) of respondents, while Arminio and Gochenauer (2004) reported 28% of respondents describing a vague connection between learning outcomes and CAS standards. The primary reason given for learning outcomes not being connected to assessment was that student satisfaction is measured more often than learning outcomes.

Positive Comments and Constructive Criticism of CAS

Respondents were asked to reflect generally on CAS and share their thoughts. The most common theme was focused on the collaborative nature of the CAS standards. This is emphasized by the thoughts of one respondent:

CAS is an essential resource to the student affairs profession. It is the ONLY available set of objective standards for a standard of practice in each area of student affairs. The process by which CAS standards are written and vetted is excellent,

and “knowing functional area groups from across campus had to come together to agree on these standards provides even more weight as we work to make change.” The most common challenge of CAS standards was summarized by one respondent: “The sections (of CAS) are somewhat repetitive across functional areas…and the learning outcomes could be more specific to each functional area rather than just discussing broadly.”

Implications

The data collected in this study not only support and enrich the research of Arminio and Gochenauer (2004), but also provide an indicator of sustained knowledge and use of CAS since its introduction in the 1970s. The current study also indicates that CAS is used primarily to conduct self-assessment and that these assessment activities are directly related to established learning outcomes. Recognizing the role CAS can play in enhancing the assessment practices of student affairs educators, professional organizations may want to consider additional means of providing training on CAS standards for their members.

Department leaders are encouraged to continue intentional discussions about the role of assessment in the day-to-day work of student affairs. To ensure continued commitment to assessment activities in the future, considerable thought and resources need to be part of a department’s strategic planning. If one role of student affairs educators is to create the most effective learning opportunities for students, it is imperative that assessment undertakings hold a place of priority.

Limitations and Future Research

This study has a number of limitations. The overall response rate was low. This may have been impacted by several confounding factors. Specifically, the original study used a paper and pencil survey that was mailed to prospective participants. The current study mirrored the questions from the original study, but used an electronic platform for administration. Shih and Fan (2008) have found “web survey modes generally have lower response rates (about 10% lower on the average) than mail survey modes” (p. 264).

The investigators experienced some unforeseen minor technological complications in the use of SurveyMonkey, and three slight revisions were needed during the distribution of the survey. As a result, initial invitees were asked to disregard the first link (to the first survey) and use the subsequent link. Future investigators who choose to further replicate the original research may want to revert to a paper and pencil version of the instrument.

It is also possible that those who chose not to participate selected out of the survey because they were unfamiliar with CAS and did not feel their responses would be valued. It may benefit investigators to more overtly express that invitees do not need to be versed in CAS to participate in the research. Although the sample size was sufficient, others may want to implement additional strategies to increase the overall sample size.

The participants of this study were exclusively members of a “member association” of CAS, thus potentially skewing the results of the study in the direction of CAS knowledge and use. Concurrently, selection bias may also be a limitation; those who responded may have been more invested in the subject of CAS or have a strong orientation towards the support of CAS. This restricts the generalizability of the findings to a wider range of diverse student affairs professionals who may not belong to these member associations and limits the contextual range of the data. Future investigations may want to consider a comparison group by including professional organizations that are not member associations of CAS.

Conclusion

Colleges and universities continue to work towards improving assessment and accountability practices. Student affairs professionals seeking to advance their programs and services may want to reflect on whether CAS has served as a valuable resource for peers who, in this study, indicated positive experiences with the CAS instrument. CAS provides a vetted tool that can serve as a resource in creating new programs, improving current practices and generally providing an instrument with which to judge our work in an intentional way. It is likely that CAS usage will continue to grow in member organizations and that new functional areas will be added.

In summary, two participants articulated the overall value of CAS in these ways: “I find the CAS standards to be very meaningful and an important framework from which to maintain clear focus about what programs are/are not doing and how to communicate to others what national standards and norms are” and “I think CAS standards are valuable to give our work credibility and as they provide guidance for us as we develop our programs.”

Discussion Questions

- What additional avenues can be utilized to broaden and enhance the use of CAS across divisions of Student Affairs?

- How can a CAS self-assessment study provide additional credibility and validity to the work of student affairs professionals?

- As campuses continue to explore and examine their own cultures of assessment, where does the CAS instrument fit into this picture?

References

Arminio, J. & Gochenauer, P. (2004). After 16 years of publishing standards, do CAS standards make a difference? College Student Affairs Journal, 24(1), 51-65.

Council for the Advancement of Standards in Higher Education. (2012). CAS professional standards for higher education (8th ed.). Washington, DC: Author. Retrieved from http://www.cas.edu/blog_home.asp?display=37

Keeling, R. P., Wall, A. F., Underhile, R. & Dungy, G. J. (2008). Assessment reconsidered: Institutional effectiveness for student success. Washington, DC: National Association of Student Personnel Administrators.

Komives, S. R. & Smedick, W. (2012). Using standards to develop student learning outcomes. In K. L. Guthrie & L. Osteen (Eds.), Developing Students’ Leadership Capacity. New Directions for Student Services, no. 140 (pp. 77-88). San Francisco: Jossey-Bass.

Shih, T. H. & Fan, X. (2008). Comparing response rates from Web and mail surveys: A meta-analysis. Field Methods, 20, 249-271.

Upcraft, M. & Schuh, J. (1996). Assessment in student affairs: A guide for practitioners. San Francisco: Jossey-Bass.

About the Authors

Wendy Neifeld Wheeler, Ph.D. is the Dean of Students/Title IX Coordinator at the Albany College of Pharmacy and Health Sciences. She also teaches as an adjunct instructor in the College Student Services Administration program at The College of Saint Rose.

Kelcie Timlin, MS.Ed., is an Assistant Registrar at The College of Saint Rose. Her current interests include Academic Advising and whole student development.

Tristan Rios, MS.Ed., is a Resident Director at Hamilton College. He is interested in pursuing more advanced positions in Residence Life and aspires to be a Director.

Please e-mail inquiries to Wendy Neifeld Wheeler.

Disclaimer

The ideas expressed in this article are not necessarily those of the Developments editorial board or those of ACPA members or the ACPA Governing Board, Leadership, or International Office Staff.